Variable Ratio: You’ll Get it! I Promise!

Variable Ratio (VR) is probably going to be the most used tool in your toolbelt.

Compared to the other Schedules of Reinforcement, Variable Ratio tends to fit the bill for most of the behaviors you want to train.

Just like in my previous articles, let’s break down the schedule with its name first. Variable just means randomized, while Ratio refers to the number of times the behavior is performed. With this in mind, Variable Ratio means you are providing a reward after a randomized number of times the desired behavior has been performed.

Due to the variable part of this reinforcement schedule, this is one of the most resistant schedules you can use, if not the most resistant. That is, behavior trained on it is resistant to extinction. Breaking that down further, when you start using this schedule, there is a good chance the behavior you are trying to reinforce will stick around for a long, long time.

In shorthand notation, you’re going to see people write this as “VR10.” What this means is the subject is reinforced after a varying number of responses, and the number of responses needed to earn a reinforcer averages out to 10.
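If it helps to see the averaging idea in action, here is a minimal Python sketch of a VR10 schedule. It assumes each requirement is drawn uniformly from 1 to 19 responses, which is just one convenient way to get an average of 10; the function name `vr_requirements` is made up for this illustration, and real trainers or experimenters may pick their numbers differently.

```python
import random


def vr_requirements(mean_ratio, n_reinforcers, seed=None):
    """Return how many responses are required before each reinforcer."""
    rng = random.Random(seed)
    # Assumption: requirements are drawn uniformly from 1..(2 * mean_ratio - 1),
    # a range whose average is exactly mean_ratio.
    return [rng.randint(1, 2 * mean_ratio - 1) for _ in range(n_reinforcers)]


# Simulate a long run on VR10 and check what the requirements average out to.
requirements = vr_requirements(mean_ratio=10, n_reinforcers=10_000, seed=1)
average = sum(requirements) / len(requirements)

print("First few requirements:", requirements[:8])
print("Average responses per reinforcer:", round(average, 2))
```

Any single requirement can be as low as 1 or as high as 19, so the subject can’t predict the next reward, but over a long run the average should land close to 10.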

Let’s dive into some examples.

Everyday Examples

This is best seen in slot machines and most forms of gambling. You, as the gambler, don’t know when you’re going to get your next reinforcer. So you try and try again. I mean, the next one could be the jackpot, right?

This idea of “the next one” is also what fuels most variable-ratio-based behaviors: the belief that the next one is going to be the big hit. Animals and humans are both susceptible to this, and as a result, behaviors trained under this schedule will be very easy to control.

But as gambling goes, the behavior being reinforced is putting money into the machine. From there, you’re reinforced after an unpredictable number of plays, which keeps you feeding money into the machine. This is variable ratio at work: the reward depends on how many times you’ve played, not on how much time has passed, and you never know which play will pay out.

Rewards used in casinos are also interesting because, as Karen Pryor notes in her book, animals and humans have a strong response to what she calls the “Jackpot effect.” It’s the idea that if you do a behavior and win an extremely large reward, you’re more likely to do that behavior again, even if you get nothing the next few times. Personally, I put this under variable ratio, since your subject is being rewarded based on an action and doesn’t know when the next reinforcement will come, or even how large it will be.

In a casino setting, a single larger-than-average win encourages the gambler to keep gambling in the future.

Card booster packs also use Variable Ratio, since they encourage you to spend money and you don’t know which pack will get you the card (reinforcer) you want. With enough booster pack purchases, though, you will most likely get that card eventually. Gacha games operate on the same concept.
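To put rough numbers on the “enough packs” idea, here is a small Python sketch. It assumes every pack independently has the same chance of containing the card you want, and the 5% figure is a hypothetical value picked for illustration, not the odds of any real card game. The function names are made up as well.

```python
def chance_of_card(p_per_pack, packs_opened):
    """Chance of pulling the wanted card at least once in packs_opened packs."""
    # Assumption: each pack is an independent draw with the same odds.
    return 1 - (1 - p_per_pack) ** packs_opened


def expected_packs(p_per_pack):
    """Average number of packs opened before the card shows up."""
    return 1 / p_per_pack


p = 0.05  # hypothetical: a 5% chance per pack

for packs in (1, 10, 20, 50, 100):
    print(f"{packs:>3} packs: {chance_of_card(p, packs):.1%} chance of the card")

print("Average packs needed:", round(expected_packs(p), 1))
```

Under these assumptions the chance climbs toward certainty as you buy more packs but never quite reaches it, which is the same “maybe the next one” pull that keeps a gambler at the slot machine.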

On the note of games, you’ll see this all the time in video games. In most games, anything that’s based on a random loot drop is putting its players on a variable ratio schedule. Besides obvious examples in games like World of Warcraft, Monster Hunter World, and Final Fantasy, you can see this in games like Pokémon GO, where players are rewarded for walking with a Pokémon spawn. You don’t know which ones will spawn near you (there are some fixed spawn points, which in that sense are more like a fixed schedule), and as a result, players are encouraged to both keep the app on and travel more with it.

Features of Variable Ratio

As I’ve said before, Variable Ratio is useful because the variable part of this schedule creates an extremely resistant form of behavior – it’s going to stick around for a while.

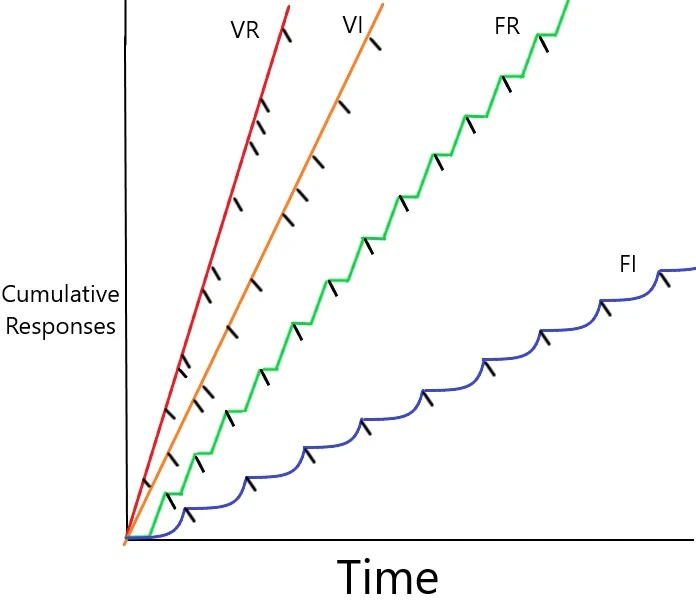

Let’s go back to the chart to see what the response rate of Variable Ratio is like…

As you can see, this schedule of reinforcement has the highest response rate out of all the schedules. Subjects tend to take only very short breaks, even right after being reinforced. The response rate is also consistent and frequent compared to other schedules.

Other Examples

You’re going to see this kind of schedule in almost every form of animal training. I’d argue you’ll be hard-pressed to find competent trainers using anything other than variable ratio, just because of how useful it is for teaching and maintaining behaviors.

With dogs and cats, variable ratio schedules can be used with very few treats (great for maintaining an animal’s body weight) and can also train behaviors extremely quickly (great if you’re in a crunch). But don’t let its speed and expediency make you think this method is cheap. Because animals learn quickly under it, you can get more done in each training session with your subject.

Closing Thoughts

I love Variable Ratio schedules. It’s the schedule I’ve used most with the majority of the animals I’ve trained.

If there were one place to start in animal training, it would be this. Once your subject isn’t sure when they’ll be getting their reinforcer, you can start strengthening older behaviors and start new behaviors on a continuous reinforcement schedule.